News

This page contains the recent news of our group.

04.28.2026. OpenAI Podcast

I have been featured in the OpenAI Podcast episode titled What happens now that AI is good at math?

04.27.2026. Berkeley IEOR Seminar

Talk at the Berkeley IEOR Seminar titled Point Convergence of Nesterov’s Accelerated Gradient Method: An AI-Assisted Proof.

04.13.2026. Quanta Magazine

I have been featured in the Quanta Magazine article titled The AI Revolution in Math Has Arrived.

02.18.2026. Financial Times

I have been featured in the Financial Times article titled Artificial intelligence groups test capabilities of models with unsolved maths questions.

01.2026. OpenAI

I am joining OpenAI as a Member of Technical Staff while taking a leave of absence from UCLA.

07.22.2025. International Conference on Continuous Optimization

In-person talk at ICCOPT 2025.

07.07.2025. SNU Data Science Seminar

In-person talk at the Seoul National University Graduate School of Data Science Seminar.

05.2025. ICML paper

Our group has 1 paper accepted to ICML as an oral:

- LoRA Training Provably Converges to a Low-Rank Global Minimum or It Fails Loudly (But it Probably Won't Fail). J. Kim, J. Kim, and E. K. Ryu, International Conference on Machine Learning (Oral, top 120/12107=1.0% of papers), 2025.

04.28.2025. ICLR 2025 Workshop on Deep Generative Models

Our ICLR 2025 Workshop on Deep Generative Model in Machine Learning: Theory, Principle and Efficacy was a blast!

02.2025. Sloan Research Fellowship

I received the Sloan Research Fellowship.

02.27.2025. Princeton Optimization Seminar

Talk at the Princeton Optimization Seminar titled Toward a grand unified theory of accelerations in optimization and machine learning.

02.19.2025. UCSD Control-PI Seminar

Talk at the UCSD Control & Pizza seminar titled Optimization Algorithm Design via Electric Circuits.

01.2025. ICLR papers

Our group has 2 papers accepted to ICLR:

- Optimal Non-Asymptotic Rates of Value Iteration for Average-Reward Markov Decision Processes. J. Lee and E. K. Ryu, International Conference on Learning Representations, 2025.

- Encryption-Friendly LLM Architecture. D. Rho, T. Kim, M. Park, J. W. Kim, H. Chae, E. K. Ryu, and J. H. Cheon, International Conference on Learning Representations, 2025.

11.05.2024. JHU MINDS Seminar

In-person talk at the Johns Hopkins University Mathematical Institute for Data Science (MINDS) Seminar.

10.2024. NeurIPS papers

Our group has 2 papers accepted to NeurIPS, one as a spotlight:

- Optimization Algorithm Design via Electric Circuits. S. P. Boyd, T. Parshakova, E. K. Ryu, J. J. Suh, Neural Information Processing Systems (Spotlight, top 326/15671=2.1% of papers), 2024.

- Gradient-free Decoder Inversion in Latent Diffusion Models. S. Hong, S. Y. Jeon, K. Lee, E. K. Ryu, S. Y. Chun, Neural Information Processing Systems, 2024.

10.30.2024. UCLA Applied Math Colloquium

In-person talk at the UCLA Applied Math Colloquium.

10.2024. INFORMS Optimization Society Prize for Young Researchers

I received the INFORMS Optimization Society Prize for Young Researchers.

10.2024. INFORMS Annual Meeting

In-person talk at the 2024 INFORMS Annual Meeting.

Fall 2024. Joined UCLA

I joined UCLA Math as an assistant professor in fall 2024. I will dearly miss my time at Seoul National University.

09.2024. Sehyun: Youlchon AI Research Fellowship

Sehyun received the Youlchon AI Research Fellowship (율촌 AI 장학생).

07.2024. European Congress of Mathematics

I gave an in-person talk at the European Congress of Mathematics held in Seville, Spain.

07.08.2024. SAIT AI Society Seminar

I gave an in-person talk at the Samsung Advanced Institute of Technology (SAIT).

06.2024. Workshop at Erwin Schrödinger International Institute

I gave an in-person talk at the One World Optimization Seminar in Vienna hosted at the Erwin Schrödinger International Institute for Mathematics and Physics.

05.2024. ICML papers

Our group has 2 papers accepted to ICML, one as a spotlight and one as an oral:

- Optimal Acceleration for Minimax and Fixed-Point Problems is Not Unique. T. Yoon, J. Kim, J. J. Suh, E. K. Ryu, International Conference on Machine Learning (Spotlight, top (144+191)/9473=3.5% of papers), 2024.

- LoRA Training in the NTK Regime has No Spurious Local Minima. U. Jang, J. D. Lee, and E. K. Ryu, International Conference on Machine Learning (Oral, top 144/9473=1.5% of papers), 2024.

05.2024. Jaewook: Rice Applied Math Postdoc

Jaewook will be joining the Rice University Computational Applied Mathematics and Operations Research Department as a postdoc in fall 2024. We wish Jaewook the best for his very promising academic career.

05.2024. TaeHo: Johns Hopkins Applied Math Postdoc

TaeHo will be joining the Johns Hopkins University Department of Applied Mathematics and Statistics as a postdoc in January 2025. We wish TaeHo the best for his very promising academic career.

05.2024. Jisun: Princeton ORFE Postdoc

Jisun will be joining the Princeton University Department of Operations Research and Financial Engineering as a postdoc in fall 2024. We wish Jisun the best for her very promising academic career.

05.31.2024. Yonsei University colloquium

I gave a talk at the Yonsei University Department of Artificial Intelligence titled In Deep Learning, What Should Mathematical Theory Look Like?

05.28.2024. Gauss Colloquium

I gave a talk at the SNU Mathematics Department's Gauss Colloquium.

05.18.2024. KSIAM Outstanding Young Investigator Award Talk

I gave a talk at the KSIAM Spring Conference on receiving the KSIAM Outstanding Young Investigator Award.

04.2024. Jaeyeon: Joining Harvard CS (Ph.D.)

Jaeyeon will be joining the Harvard CS Ph.D. program in Fall 2024. We wish Jaeyeon the best for his very promising academic career.

04.2024. Jinhee: Joining Stanford CME (Ph.D.)

Jinhee will be joining the Stanford CME (Applied Math) Ph.D. program in Fall 2024. We wish Jinhee the best for his very promising academic career.

04.20.2024. KMS Talk

I gave an in-person talk at the Korean Mathematical Society Spring Meeting. (대한수학회 봄 연구발표회)

04.02.2024. KAIST Graduate School of AI Colloquium

I gave an in-person talk at the KAIST Graduate School of AI Colloquium.

03.2024. INFORMS Optimization Society Conference

I gave an in-person talk at the 2024 INFORMS Optimization Society Conference.

03.15.2024. SNU Stats Seminar

I gave an in-person talk at the SNU statistics colloquium.

03.12.2024. KAIST SAARC Colloquium

I gave an in-person talk at the KAIST Stochastic Analysis and Application Research Center (SAARC) Colloquium.

02.28.2024. KIAS Seminar

I gave an in-person talk at KIAS.

02.2024. Education Prize Award (SNU)

I received the Education Prize from the College of Natural Science of Seoul National University.

01.22.2024. U. Chicago Computational and Applied Math Colloquium

I gave an in-person talk at the University of Chicago Computational and Applied Math (CAM) Colloquium.

01.17.2024. UCLA Applied Math Colloquium

I gave an in-person talk at the University of California, Los Angeles Applied Math Colloquium.

01.2024. ICLR paper

Our group has 1 paper accepted to ICLR:

- Image Clustering Conditioned on Text Criteria. S. Kwon, J. Park, M. Kim, J. Cho, E. K. Ryu, and K. Lee, International Conference on Learning Representations, 2024.

23.11.27 Short talk at Berkeley's Simons Institute

I gave an in-person talk at Berkeley's Simons Institute for the Theory of Computing.

23.11 KSIAM Outstanding Young Investigator Award, 2023

I received the KSIAM Outstanding Young Investigator Award (젊은 연구자상).

23.10 NeurIPS papers

Our group has 4 papers accepted to NeurIPS:

- Censored Sampling of Diffusion Models Using 3 Minutes of Human Feedback. T. Yoon, K. Myoung, K. Lee, J. Cho, A. No, and E. K. Ryu, Neural Information Processing Systems, 2023.

- Time-Reversed Dissipation Induces Duality Between Minimizing Gradient Norm and Function Value. J. Kim, A. Ozdaglar, C. Park, and E. K. Ryu, Neural Information Processing Systems, 2023.

- Accelerating Value Iteration with Anchoring. J. Lee and E. K. Ryu, Neural Information Processing Systems, 2023.

- Continuous-Time Analysis of Anchor Acceleration. J. J. Suh, J. Park, and E. K. Ryu, Neural Information Processing Systems, 2023.

23.10 INFORMS Annual Meeting

I gave an in-person talk at the 2023 INFORMS Annual Meeting in Phoenix titled Computer-Assisted Design of Accelerated Composite Optimization Methods: OptISTA.

23.10.12 Stanford ISL Colloquium

I gave an in-person talk at the Stanford University Information Systems Laboratory (ISL) Colloquium.

23.10.03 Northwestern IEMS Seminar

I gave an in-person talk at the Northwestern University Industrial Engineering and Management Sciences (IEMS) department seminar.

23.09.29 CO Mines Applied Mathematics and Statistics Colloquium

I gave an in-person talk at the Colorado School of Mines Applied Mathematics and Statistics Colloquium.

23.09.27 Wisconsin–Madison SILO Seminar

I gave an in-person talk at the University of Wisconsin–Madison Systems-Information-Learning-Optimization Seminar.

23.08 Jinhee: KFAS Fellowship

Jinhee was selected to be part of the KFAS Fellowship (고등교육재단 장학금) 2024 cohort. The KFAS fellowship is one of the most prestigious fellowships in Korea, providing full financial support for five years of graduate studies.

23.08.25 UNIST Workshop Optimization & Machine learning

I will give a talk titled Toward a grand unified theory of accelerations in optimization and machine learning at the UNIST Workshop Optimization & Machine learning.

23.08.22 Tutorial on Diffusion Probabilistic Models

I will give a tutorial titled Diffusion Probabilistic Models and Text-to-Image Generative Models at Inha University.

23.08.07–08.08 Summer School on Mathematics of Deep Learning and AI

The SNU math department hosted the 10-10 Summer School on Mathematics of Deep Learning and AI. I am the main organizer, and I gave a talk titled Toward a grand unified theory of accelerations in optimization and machine learning.

23.08.04 Terence Tao Lecture

The SNU math department hosted Professor Terence Tao to give a lecture titled A counterexample to the periodic tiling conjecture.

23.07.31–08.03 Samsung Global Research Symposium (GRS)

The Samsung Science & Technology Foundation hosted the Global Research Symposium (GRS) on Mathematical Theory of AI. I am part of the organizing board, and I will give a talk titled Toward a grand unified theory of accelerations in optimization and machine learning.

23.08.01 Tutorial on Diffusion Probabilistic Models

I gave a tutorial titled Diffusion Probabilistic Models and Text-to-Image Generative Models at the Short Course on Information and Learning Theory (정보 및 학습이론 단기강좌) hosted by the Coding and Information Theory Society (부호 및 정보이론 연구회).

23.06.28 ICIM tutorial

I gave a tutorial titled Stochastic Differential Equations + Deep Learning = Diffusion Probabilistic Models hosted by Workshop on Artificial Intelligence for Industrial Mathematics.

23.06 SIAM Conference on Optimization

I gave an in-person talk at the SIAM Conference on Optimization titled Non-Nesterov Acceleration Methods in First-order Optimization. (Slides)

23.06 Jaesung Park

Jaesung joined our research group as a Ph.D. student.

23.05 New paper with KRAFTON AI Research Center

Excited to announce our new paper Censored Sampling of Diffusion Models Using 3 Minutes of Human Feedback, which is joint work with the KRAFTON AI Research Center. This work is our first collaboration with an industry research lab, and it represents a new initiative to pursue experimental AI research alongside the theoretical work we have been doing. (This paper has no theorems or proofs!) This paper proposes a method for aligning diffusion probabilistic models with human preferences by utilizing minimal (~3 minutes) human feedback.

23.05 New paper with Asuman Ozdaglar's group of MIT

Excited to announce our new paper Time-Reversed Dissipation Induces Duality Between Minimizing Gradient Norm and Function Value, which is joint work with Jaeyeon Kim of my group and Chanwoo Park and Asuman Ozdaglar of MIT. This work presents a new duality principle between accelerated convex optimization algorithms for reducing function values and reducing gradient magnitude. This work is, to the best of our knowledge, the first instance of a duality of optimization algorithms (rather than optimization problems), and we are optimistic that this new discovery will open the door to many new interesting questions.

23.05 Hyunsik Chae

Hyunsik joined our research group as an M.S. student.

23.04 ICML papers

Our group has 2 papers accepted to ICML:

- Rotation and Translation Invariant Representation Learning with Implicit Neural Representations. S. Kwon, J. Y. Choi, and E. K. Ryu, International Conference on Machine Learning, 2023.

- Accelerated Infeasibility Detection of Constrained Optimization and Fixed-Point Iterations. J. Park and E. K. Ryu, International Conference on Machine Learning, 2023.

23.04 Soheun: Joining CMU Stats (Ph.D.)

Soheun will be joining the CMU Statistics Ph.D. program in Fall 2023. We wish Soheun the best for his very promising academic career.

23.04.26 UNIST AI Seminar

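I gave a talk at the UNIST Artificial Intelligence Graduate Seminar titled 이론적 이해를 거부하는 딥러닝과 새로운 난제를 위한 새로운 이론을 건축하는 수학자들 (Deep Learning That Defies Theoretical Understanding, and the Mathematicians Building New Theory for New Challenges).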

23.04 Stanford Course on Large-Scale Optimization

During Spring quarter, I will be teaching a course titled Large-Scale Convex Optimization: Algorithms and Analyses via Monotone Operators (EE 392F) at Stanford.

23.03.15–04.12 KSIAM Lecture Series

I gave a 5-part weekly lecture series titled Diffusion Probabilistic Models and Text-to-Image Generative Models hosted by KSIAM.

23.02.22. KSIAM-NIMS AI Winter School

I gave a tutorial titled Diffusion Probabilistic Models at the KSIAM-NIMS AI Winter School.

23.02 On Sabbatical at Stanford

I'm on sabbatical! I will spend my year at Stanford with my PhD advisor Stephen Boyd.

23.02 Excellent Research Award (SNU)

I received the Excellent Research Award from the College of Natural Science of Seoul National University.

23.01 Mathematical Programming 2022 Meritorious Service Award

I received the 2022 Meritorious Service Award from the journal Mathematical Programming.

22.12.03 International Conference on Matrix Theory with Applications to Combinatorics, Optimization and Data Science

I gave a talk at the International Conference on Matrix Theory with Applications to Combinatorics, Optimization and Data Science titled Continuous-Time Analysis of AGM via Conservation Laws in Dilated Coordinate Systems.

22.11.18 Naver & Korean AI Association conference

I gave a talk at the Naver & Korean AI Association conference titled Neural Tangent Kernel Analysis of Deep Narrow Neural Networks.

22.11.14 Korea University colloquium

I gave a talk at Korea University titled Infinitely Large Neural Networks.

22.11.07. Samsung Science & Technology Annual Forum

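I gave a talk at the Samsung Science & Technology Annual Forum 2022 titled 이론적 이해를 거부하는 딥러닝과 새로운 난제를 위한 새로운 이론을 건축하는 수학자들 (Deep Learning That Defies Theoretical Understanding, and the Mathematicians Building New Theory for New Challenges).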

22.10.20. KMS Invited Speaker

I gave a talk at the Korean Mathematical Society Annual Meeting titled Continuous-Time Analysis of AGM via Conservation Laws in Dilated Coordinate Systems. (대한수학회 가을 연구발표회)

22.10 INFORMS

I gave an in-person talk at the 2022 INFORMS Annual Meeting in Indianapolis titled Non-Nesterov Acceleration Methods in First-order Optimization.

22.10.07 Korean Women Mathematical Society Leaders Forum

I gave an in-person talk at the Korean Women Mathematical Society Leaders Forum (한국여성수리과학회 리더스 포럼) titled Infinitely Large Neural Networks.

22.09.29 MIT ORC Fall Seminar

I gave an in-person talk at the MIT ORC Fall Seminar titled Non-Nesterov Acceleration Methods and Their Computer-Assisted Discovery Via the Performance Estimation Problem.

22.09 Uijeong Jang

Uijeong joined our research group as a Ph.D. student.

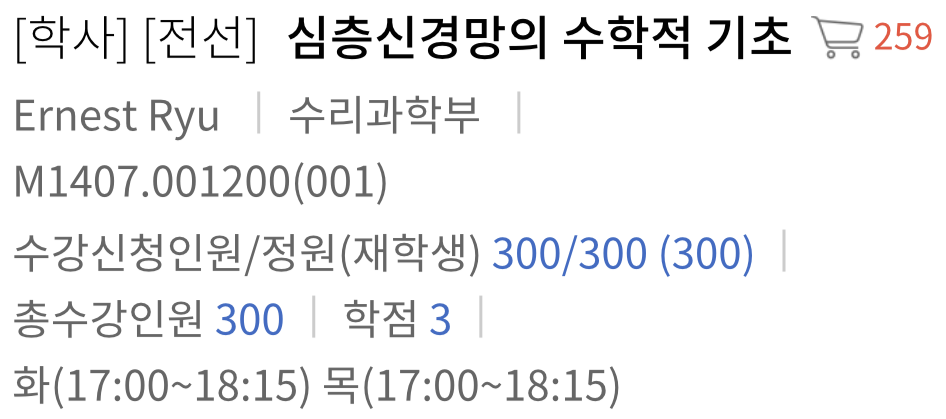

22.08.17 MathDNN enrollment reaches 300

Enrollment for Mathematical Foundations of Deep Neural Networks has reached 300 students! This is the largest enrollment of a single course on the SNU campus this semester. We have an exciting semester ahead of us.

22.08 Soheun: KFAS Fellowship

Soheun was selected to be part of the KFAS Fellowship (고등교육재단 장학금) 2023 cohort. The KFAS fellowship is one of the most prestigious fellowships in Korea, providing full financial support for five years of graduate studies.

22.08.11 KSIAM-MINDS-NIMS Conference

I gave a talk titled Continuous-Time Analysis of AGM via Conservation Laws in Dilated Coordinate Systems at the KSIAM-MINDS-NIMS International Conference on Machine Learning and PDEs.

22.08.03 SNU AI Summer School

I gave a talk titled NTK Analysis of Deep Narrow Neural Networks at the SNU AI Summer School.

22.08.01 Tutorial at Korean AI Association Summer Conference

I gave a tutorial titled Optimization for ML at the Korean AI Association Summer Conference.

22.07 Taeho and Jongmin: Youlchon AI Research Fellowship (율촌 AI 장학생)

Taeho and Jongmin received the Youlchon AI Research Fellowship (율촌 AI 장학생).

22.06 Jaewook and Jisun: Google Travel Grant

Jaewook and Jisun received the Google travel grant award to attend ICML 2022.

22.05 ICML papers

Our group has 3 papers accepted to ICML, 2 as long talks:

- Exact Optimal Accelerated Complexity for Fixed-Point Iterations. J. Park and E. K. Ryu, International Conference on Machine Learning (long presentation, top 118/5630=2% of papers), 2022.

- Continuous-Time Analysis of AGM via Conservation Laws in Dilated Coordinate Systems. J. J. Suh, G. Roh, and E. K. Ryu, International Conference on Machine Learning (long presentation, top 118/5630=2% of papers), 2022.

- Neural Tangent Kernel Analysis of Deep Narrow Neural Networks. J. Lee, J. Y. Choi, E. K. Ryu, and A. No, International Conference on Machine Learning, 2022.

22.05.28 KSIAM Tutorial

I gave a tutorial at the KSIAM Spring Conference titled Infinitely Large Neural Networks.

22.05.27 POSTECH math colloquium

I gave a talk at the POSTECH math colloquium.

22.05.06 ASCC Tutorial

I gave a tutorial at the Asian Control Conference titled Mathematical and practical foundations of backpropagation.

22.04 Chanwoo: Joining MIT EECS (Ph.D.)

Chanwoo will be joining the MIT EECS Ph.D. program in Fall 2022. We wish Chanwoo the best for his very promising academic career.

22.04.21. NIMS Colloquium

I gave a talk titled Neural Tangent Kernel Analysis of Deep Narrow Neural Networks at the NIMS Colloquium (산업수학 콜로퀴움).

22.03.31. Lecture for Recent Topics in Artificial Intelligence

I gave a lecture titled Infinitely Large Neural Networks for the class Recent Topics in Artificial Intelligence (최신 인공지능 기술) at SNU.

22.03 New PEP paper with Das Gupta and Parys of MIT

Excited to announce our new paper Branch-and-Bound Performance Estimation Programming: A Unified Methodology for Constructing Optimal Optimization Methods, which is joint work with Shuvomoy Das Gupta and Bart P.G. Van Parys of MIT. This work substantially generalizes the performance estimation problem, a computer-assisted methodology for finding first-order optimization methods, by utilizing branch-and-bound algorithms to solve non-convex QCQPs to certifiable global optimality.

22.02 AISTATS paper

Our group has 1 paper accepted to AISTATS:

- Robust Probabilistic Time Series Forecasting. T. Yoon, Y. Park, E. K. Ryu, Y. Wang, International Conference on Artificial Intelligence and Statistics, 2022.

This paper was written in collaboration with Youngsuk Park and Bernie Wang of Amazon AWS AI Labs.

22.01 Sehyun: Outstanding TA Award

Sehyun received the Outstanding TA Award for his work for the Mathematical Foundations of Deep Neural Networks course in Fall 2021.

22.01 Donghwan Rho

Donghwan has joined our research group as a Ph.D. student.

22.01.14. Bio Imaging and Signal Processing Winter School (바이오영상신호처리 겨울학교)

I gave a talk titled Plug-and-Play Methods Provably Converge with Properly Trained Denoisers at the Bio Imaging and Signal Processing Winter School (바이오영상신호처리 겨울학교).

21.12 AI Times interview

I gave an interview to AI Times. (article)

21.11.23. Gauss Colloquium

I gave a talk titled A Role of Mathematicians in Deep Learning Research in the Mathematics department's Gauss Colloquium.

21.10 1000 Citations

My Google Scholar profile has exceeded 1000 citations.

21.10 NeurIPS paper

Our group has 1 paper accepted to NeurIPS:

- A Geometric Structure of Acceleration and Its Role in Making Gradients Small Fast. J. Lee, C. Park, and E. K. Ryu, Neural Information Processing Systems, 2021.

21.10.26. INFORMS Annual Meeting

I gave a talk at the 2021 INFORMS Annual Meeting in Anaheim.

21.10.21. KMS Invited Speaker

I gave a talk at the Korean Mathematical Society Annual Meeting as an invited speaker. (대한수학회 가을 연구발표회 응용수학 상설분과 발표)

21.10.14. PDE and Applied Math Seminar at UC Riverside

I gave a talk at the PDE and Applied Math Seminar at UC Riverside.

21.10.11. One World Optimization Seminar

I gave a talk at the One World Optimization Seminar. (Video)

21.10.01. The AI Korea 2021 Conference

I gave a talk at The AI Korea 2021 Conference.

21.09 Chanwoo: KFAS Fellowship

Chanwoo was selected to be part of the KFAS Fellowship (고등교육재단 장학금) 2022 cohort. The KFAS fellowship is one of the most prestigious fellowships in Korea, providing full financial support for five years of graduate studies.

21.09.30. KMS Symposium for AI and University-Level Mathematics

I gave a talk at the KMS 2021 Symposium for AI and University-Level Mathematics.

21.08 Industrial and Mathematical Data Analytics Research Center (산업수학센터) interview

I gave an interview to the student reporter of the Industrial and Mathematical Data Analytics Research Center (산업수학센터) of SNU. (article)

21.08.25. EIMS ICCM

I gave a talk at the 2021 EIMS (이화여대 수리과학연구소) International Conference on Computational Mathematics.

21.08.09. SNU AI Summer School

I gave a talk at the SNU AI Summer School. (Video)

21.06.25. CJK-SIAM Mini Symposium

I gave a talk at the (Chinese-Japanese-Korean)-SIAM Mini Symposium: Emerging Mathematics in AI at the KSIAM Spring Conference.

21.05 Visiting student: Shuvomoy Das Gupta

Shuvomoy (Ph.D. student, MIT ORC) will join our research group for the summer (May–Aug) of 2021 as a full-time visiting student.

21.05 Book publication

The book Large-Scale Convex Optimization via Monotone Operators by myself and Wotao Yin will be published by the Cambridge University Press, which is considered to be one of the most prestigious academic publishers.

21.05 ICML papers

Our group has 2 papers accepted to ICML, one as a long talk:

- Accelerated Algorithms for Smooth Convex-Concave Minimax Problems with $\mathcal{O}(1/k^2)$ Rate on Squared Gradient Norm. T. Yoon and E. K. Ryu, International Conference on Machine Learning, accepted as long presentation (top 166/5513=3% of papers), 2021.

- WGAN with an Infinitely Wide Generator Has No Spurious Stationary Points. A. No, T. Yoon, S. Kwon, and E. K. Ryu, International Conference on Machine Learning, 2021.

21.04.08 SNU math colloquium

I gave a talk at the SNU math colloquium.

21.04.01 KAIST math colloquium

I gave a talk at the KAIST math colloquium.

21.04 Samsung Science & Technology Foundation grant

The grant proposal titled A Theory of the Many Accelerations in Optimization and Machine Learning (기계 학습과 최적화 알고리즘의 가속에 대한 통합 이론) was accepted for funding by the Samsung Science & Technology Foundation. The SSTF grant is considered to be the most prestigious research grant in Korea. I am honored and grateful for the award and the support. 삼성미래기술육성재단 (No. SSTF-BA2101-02) (중앙일보 article)

21.03 Amazon Web Services AI Labs intern: TaeHo Yoon

TaeHo will intern at the AWS AI Labs for the summer of 2021 (and therefore will be temporarily away from our research group).

21.03 Joo Young Choi

Joo Young has joined our research group as a Ph.D. student.

20.12.(14,22) Bielefeld, Germany Lecture Series: Mathematics of Infinitely Wide Neural Networks

I gave a two-part lecture series on the Mathematics of Infinitely Wide Neural Networks for the Bielefeld-Seoul International Research Training Group 2235.

20.11.25 Variational Analysis and Optimisation Webinar

I gave a talk at the Variational Analysis and Optimisation Webinar, organized by the Australian Mathematical Society. (Video)

20.11.19 SKKU (성균관대학교) math colloquium

I gave a talk at the SKKU math colloquium titled Minimax Optimization in Machine Learning.

20.11 Jaewook Suh

Jaewook has joined our research group as a Ph.D. student.

20.10.24 KMS Invited Speaker

I gave a talk at the Korean Mathematical Society Annual Meeting as an invited speaker. (대한수학회 가을 연구발표회 응용수학 상설분과 발표)

20.10 Jongmin Lee

Jongmin has joined our research group as a Ph.D. student.

20.09.02 Yonsei University math colloquium

I gave a talk at the Yonsei math colloquium titled Minimax Optimization in Machine Learning.

20.08 Sehyun Kwon

Sehyun has joined our research group as an M.S. student.

20.06 TaeHo Yoon

TaeHo has joined our research group as a Ph.D. student.

20.06 Jisun Park

Jisun has joined our research group as a Ph.D. student.

20.05 National Research Foundation grant

The grant proposal titled Utilizing Minimax Game Structures to Efficiently Train General Adversarial Networks (최소최대 게임 구조를 활용한 적대 신경망의 효율적 훈련) was accepted for funding by the National Research Foundation. I am grateful for the support. (기본연구 No. 2020R1F1A1A01072877)

20.03 Moved to SNU

I have joined the Department of Mathematical Sciences at Seoul National University as a tenure-track assistant professor.

19.12 Left UCLA

I have left the Department of Mathematics at UCLA to move to Seoul, Korea. I will dearly miss the collaboration with Wotao Yin.